Suffer from the proliferation of the species problem.

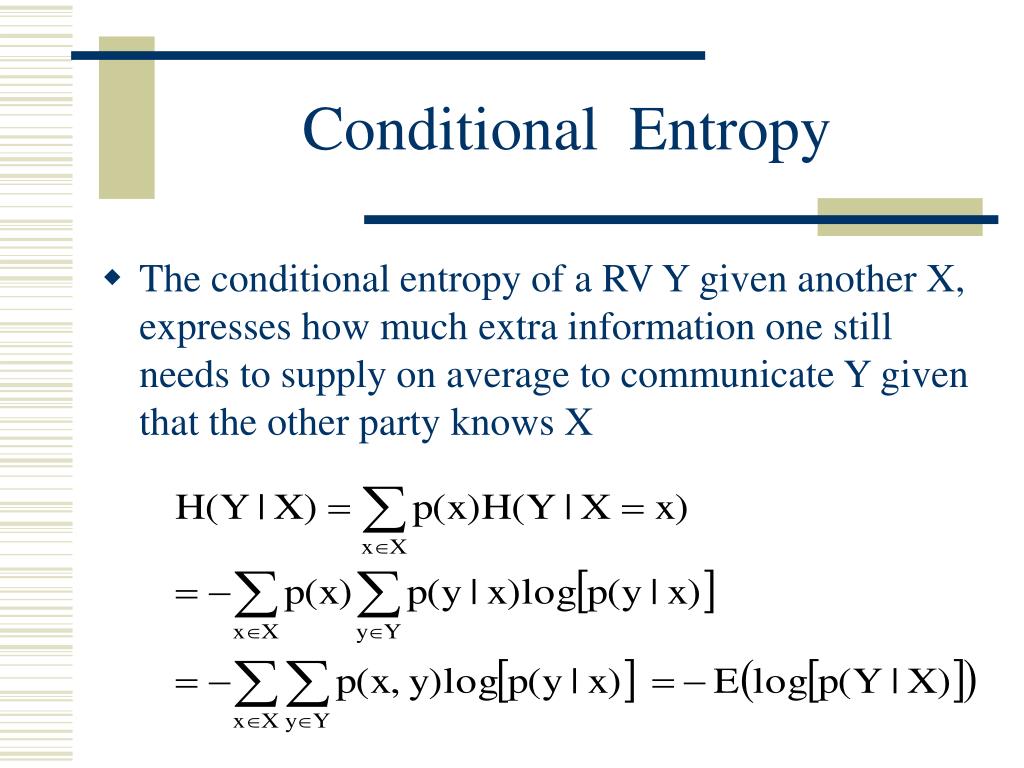

Accompanying Shannon entropy is the concept of relative entropy, defined as follows: given probability distributions $p$ and $r$ on $n$ elements, the entropy of $p$ relative to $r$ is

$$H(p \| r) = \sum_{i:\, p_i > 0} p_i \log \frac{p_i}{r_i} \ge 0.$$

There are several different definitions of relative entropy, and some of them are not equivalent. The definition we will use in this question starts from the following setting: let $M$ be a closed manifold and $P$ the set of Borel probability measures on $M$. Related topics include the convexity and semicontinuity of the relative entropy function.

Relative entropy techniques are robust, compelling, and can be applied to many physical situations. Since relative entropy is sensitive to the higher-order moments of a distribution, and not just to changes in the mean and variance, it has the major advantage of more completely capturing probabilistic information [8]. Relative entropy is also a method that quantifies the extent of the configurational phase-space overlap between two molecular ensembles [20]. One study applies relative entropy to a naturalistic large-scale corpus to calculate the differences among L2 (second language) learners at different levels. Minimum relative entropy (MRE) spectral analysis, also called minimum cross-entropy spectral analysis, was developed by Shore (1979, 1981) with spectral power as a random variable. Examples of information measures are entropy, mutual information, conditional entropy, conditional information, and relative entropy (discrimination, Kullback-Leibler information), along with the limiting normalized versions of these quantities such as entropy rate and information rate.

From the abstract of the paper "Relative entropy and the Bekenstein bound" by H. Casini: Elaborating on a previous work by Marolf et al., we relate some exact results in quantum field theory and statistical mechanics to the Bekenstein universal bound on entropy. Specifically, we consider the relative entropy between the vacuum and another state, both reduced to a local region. With the adequate interpretation, the positivity of the relative entropy in this case constitutes a well defined statement of the bound in flat space. This version arises naturally from the original derivation of the bound from the generalized second law when quantum effects are taken into account. In this formulation the bound holds automatically, and in particular it does not suffer from the proliferation of the species problem.

The dynamics of a probabilistic neural network is characterized by the distribution $\nu(x' \| x)$ of successor states $x'$ of an arbitrary state $x$ of the network. A prescribed memory or behavior pattern is represented in terms of an ordered sequence of network states $x(1), x(2), \ldots, x(l)$. A successful procedure for learning this pattern must modify the neuronal interactions in such a way that the dynamical successor of $x(s)$ is likely to be $x(s+1)$, with $x(l+1) = x(1)$. The relative entropy $G$ of the probability distribution $\delta_{x(s+1),\,x'}$ concentrated at the desired successor state, evaluated with respect to the dynamical distribution $\nu(x' \| x(s))$, is used to quantify this criterion, by providing a measure of the distance between actual and ideal probability distributions. Minimization of $G$ subject to appropriate resource constraints leads to "optimal" learning rules for pairwise and higher-order neuronal interactions. The degree to which optimality is approached by simple learning rules in current use is considered, and it is found, in particular, that the algorithm adopted in the Hopfield model is more effective in minimizing $G$ than the original Hebb law.
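As a rough illustration of the discrete definition given above (the sum over $i$ with $p_i > 0$ of $p_i \log(p_i / r_i)$), here is a minimal Python sketch. The function name `relative_entropy` and the sample distributions are hypothetical and chosen only for illustration. Returning infinity when $p$ puts mass where $r$ has none is a common convention assumed here, not something the text above specifies.

```python
import math


def relative_entropy(p, r):
    """Entropy of p relative to r: sum over i with p_i > 0 of p_i * log(p_i / r_i).

    p and r are sequences of probabilities over the same n elements.
    """
    if len(p) != len(r):
        raise ValueError("p and r must have the same length")
    total = 0.0
    for p_i, r_i in zip(p, r):
        if p_i > 0:
            if r_i == 0:
                # Assumed convention: p puts mass where r has none.
                return math.inf
            total += p_i * math.log(p_i / r_i)
    return total


# Illustrative values only (not taken from any source).
uniform = [0.25, 0.25, 0.25, 0.25]
skewed = [0.7, 0.1, 0.1, 0.1]
delta = [1.0, 0.0, 0.0, 0.0]  # distribution concentrated on one element

print(relative_entropy(uniform, uniform))  # identical distributions give 0.0
print(relative_entropy(skewed, uniform))   # strictly positive
print(relative_entropy(delta, skewed))     # equals -log(0.7)
```

The last call mirrors the learning criterion described above in a simplified form: when $p$ is concentrated at a single desired state, the relative entropy reduces to minus the logarithm of the probability that the second distribution assigns to that state, so making the desired state more likely drives the value toward zero.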
